The challenge: not everything can be automated

Conversational analysis platforms like Raisetalk allow you to automatically evaluate hundreds of criteria on each interaction: greeting quality, empathy, reformulation of customer needs, commercial proposal...

But some elements cannot be detected automatically because they simply do not appear in the conversation. This is especially true of compliance with your internal processes:

- Did the agent properly create the ticket in your CRM?

- Did they follow the planned escalation procedure?

- Did they validate the customer's identity according to your security protocol?

These verifications require the intervention of a supervisor who accesses your business tools.

Until now, this meant managing two evaluation systems in parallel, with all the risks of inconsistency and wasted time that this entails.

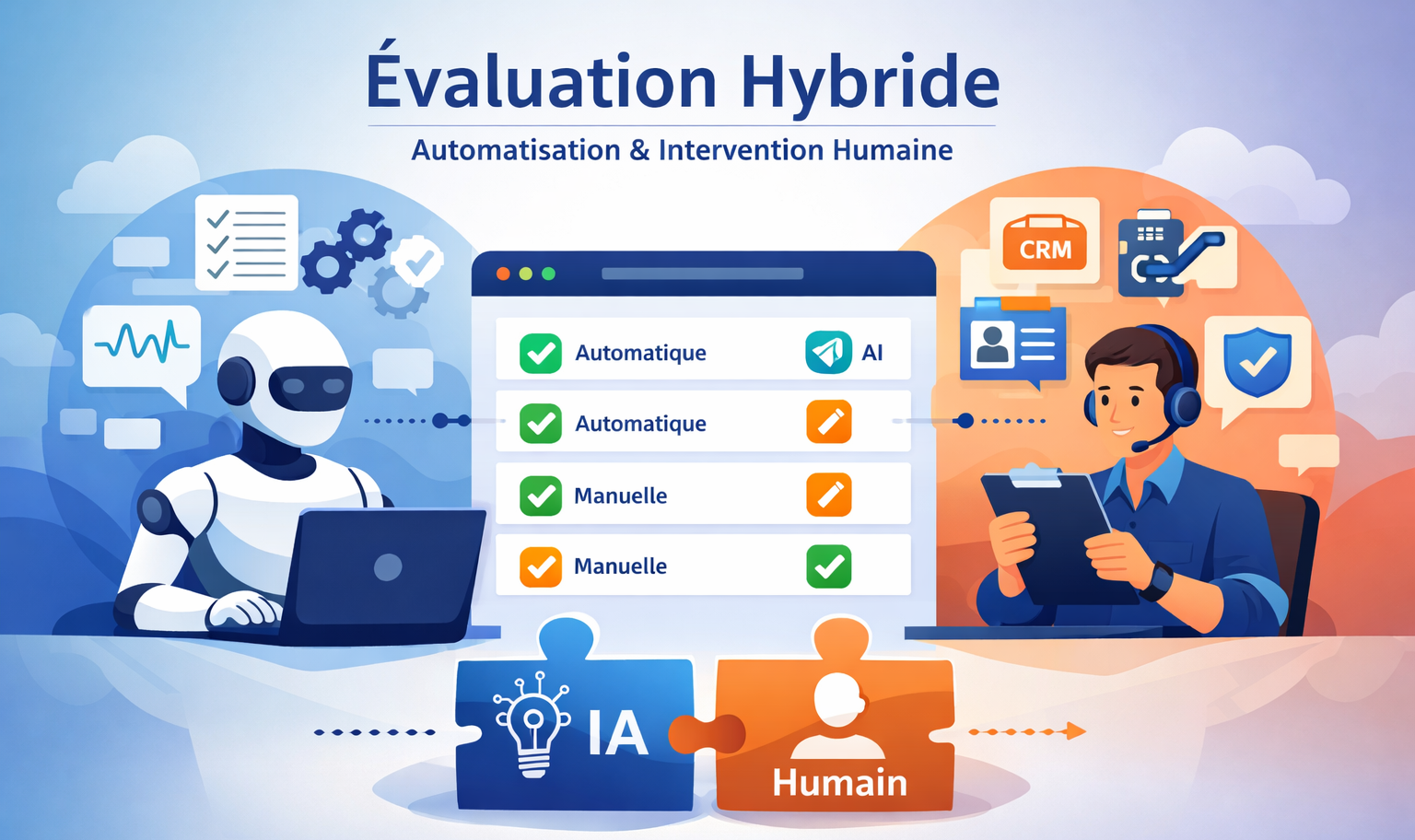

The solution: hybrid analysis

Raisetalk now lets you combine automatic and manual evaluation within the same analysis grid.

For each criterion in your grid, you simply define the desired evaluation mode: automatic (by AI) or manual (by a supervisor).

➔ This approach offers total flexibility: you maintain the efficiency of automation for criteria detectable in the conversation, while integrating human expertise where it is essential.

How does it work?

Simple configuration

When creating or modifying a criterion in your evaluation grid, select "Manual" in the evaluation field.

➔ Other parameters (title, weighting, rating scale) are configured as usual.
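To make the idea concrete, a hybrid grid can be pictured as a list of criteria, each carrying its own evaluation mode. The sketch below is purely illustrative: the `Criterion` class, the `Mode` enum, the field names, and the example criteria are all assumptions made for this example, not Raisetalk's actual data model or API.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTOMATIC = "automatic"  # rated by AI from the conversation itself
    MANUAL = "manual"        # rated by a supervisor who checks business tools


@dataclass
class Criterion:
    title: str
    weight: float
    mode: Mode


# Hypothetical grid mixing both evaluation modes
grid = [
    Criterion("Greeting quality", 1.0, Mode.AUTOMATIC),
    Criterion("Empathy", 2.0, Mode.AUTOMATIC),
    Criterion("Ticket created in CRM", 2.0, Mode.MANUAL),
    Criterion("Identity validated", 3.0, Mode.MANUAL),
]

# Criteria that still need a supervisor's input
manual_titles = [c.title for c in grid if c.mode is Mode.MANUAL]
print(manual_titles)
```

The point of the sketch is that the mode is just one more attribute of a criterion, alongside its title and weighting, so both kinds of criteria live in the same grid.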

Collaborative evaluation

Supervisors complete manual criteria directly in the Raisetalk interface.

➔ This evaluation can be done in multiple sessions, allowing different managers to be involved if necessary.

Mandatory justification

As with automatic evaluations, each manual rating must come with a comment.

➔ The agent thus receives complete and constructive feedback to help them improve.

Modification history

Each version of the analysis is preserved and accessible, ensuring total traceability of evaluations.

Tracking incomplete analyses

Easily identify conversations where some criteria remain to be evaluated by filtering on the "N/A" rating.
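The logic behind this filter can be sketched in a few lines. The example below is a hypothetical illustration only: the `analyses` dictionary, the conversation ids, and the use of `None` to stand for the "N/A" rating are assumptions, not a Raisetalk export format.

```python
from typing import Dict, List, Optional

# Hypothetical data: conversation id -> {criterion title: rating or None}.
# None stands in for the "N/A" rating of a criterion not yet evaluated.
analyses: Dict[str, Dict[str, Optional[int]]] = {
    "conv-001": {"Empathy": 4, "Ticket created in CRM": None},
    "conv-002": {"Empathy": 5, "Ticket created in CRM": 5},
    "conv-003": {"Empathy": 3, "Ticket created in CRM": None},
}


def incomplete(data: Dict[str, Dict[str, Optional[int]]]) -> List[str]:
    """Return ids of conversations with at least one unrated criterion."""
    return [
        conv_id
        for conv_id, ratings in data.items()
        if any(rating is None for rating in ratings.values())
    ]


pending = incomplete(analyses)
print(pending)
```

Here `pending` lists the conversations a supervisor still has to finish, which is the same view the "N/A" filter gives in the interface.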

Concrete benefits

This feature addresses a need expressed by many quality managers: having a unified view of performance, without multiplying tools and exports.

Your teams gain efficiency by centralizing all evaluations in one place.

Your agents benefit from consistent and complete feedback.

And you maintain a global view of quality, whether it concerns conversational criteria or compliance with your business processes.